Today is DNA Day! This year it’s an especially big deal as we’re honoring the 60th anniversary of Watson and Crick’s famous discovery of the double-helix structure of DNA as well as the 10th anniversary of the completion of the Human Genome Project.

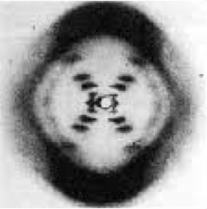

Back when Watson and Crick were poring over Rosalind Franklin’s X-ray diffraction image of DNA, they never could have imagined the volume of data that scientists would ultimately generate by reading the sequence of those DNA molecules. Indeed, even at the start of the HGP nearly 40 years later, the data requirements for processing genome sequence would have been staggering to consider.

Check out this handy guide from the National Human Genome Research Institute presenting statistics from the earliest HGP days to today. In 1990, GenBank contained about 49 megabases of sequence; today, that has soared to some 150 terabases. The computational power needed to tackle this amount of genomic data didn’t even exist when the HGP got underway. Consider what kind of computer you were using in 1990: for us, that brings back fond memories of the Apple IIe, mainframes, and the earliest days of the Internet (brought to us by Prodigy).

A couple of decades later, we have a far better appreciation for the elastic compute needs of genomic studies. Not only do scientists’ data needs spike and dip depending on where they are in a given experiment, but the amount of genome data being produced globally will also continue to skyrocket. That’s why cloud computing has become such a popular option for sequence data analysis, storage, and management: it’s a simple way for researchers who don’t have massive in-house compute resources to go about their science without having to spend time thinking about IT.

So on DNA Day, we honor those pioneers who launched their unprecedented studies with a leap of faith: that the compute power they needed would somehow materialize in the nick of time. Fortunately for all of us, that gamble paid off!